Claude AI’s Account Bans

“Claude is digging its own grave. It sees itself as the Apple of AI companies.”

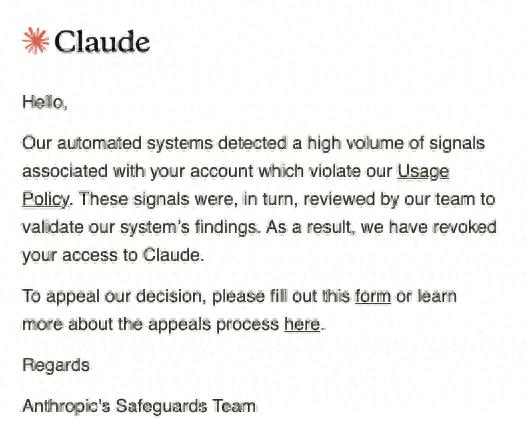

Pato Molina, CTO of Belo App, expressed frustration after Anthropic banned more than 60 Claude accounts belonging to his organization without explanation. He shared a screenshot of the email he received from Anthropic.

Reports indicate that Belo currently has over 3 million users in Latin America, with platform transactions expected to exceed $1 billion by 2025.

Molina stated, “Anthropic decided to close our entire organization’s accounts on the grounds of allegedly violating its terms of service. I have no idea which specific policy we violated: we just received an email, and that was it. Our Claude accounts were banned. If we want to appeal, we have to fill out a Google form, which is absurd.”

“More than 60 people suddenly lost their core tools for completing work. Various integrations, skills, and conversation histories were either completely lost or indefinitely frozen. This is a huge lesson for any software company that relies on AI tools in critical business processes: never put all your eggs in one basket.”

Molina added, “Besides the poor user experience and lack of explanation, this practice directly harms Anthropic’s revenue. They just banned an entire legitimate company with over 60 paid accounts, all subscribed and using the API. These are real customers generating ongoing revenue, and they were actively using the service.”

Users Share Similar Experiences

The experiences of Belo’s team are not isolated. Other users have reported similar issues, with one commenting, “My company faced the same problem. No warning, no explanation. We lost all customer information twice. This is ridiculous.”

Another developer shared that their account was banned just 15 minutes after registration:

“I was banned for no reason. My account was suspended right after I registered. I hadn’t even sent a prompt or made any API calls. I was just setting up my local development environment (VS Code, Node.js, CLI) when the ban occurred. I suspect the issue lies with a shared company credit card. My business partner has linked this card to his own Claude account, and the system likely flagged it as a duplicate account, triggering an automatic ban.”

The developer submitted multiple appeals. The first was rejected without a specific reason, and the rest received no response. “Every time I email customer support, I get an automated reply directing me back to the appeal form. The entire process is incredibly frustrating, and there has been no human review of my case.”

Concerns Over Age Verification

A developer known as “Trummler12,” aged 26, was also inexplicably banned before Anthropic announced its identity verification measures. “I admit I can be a bit naive at times, but I’m genuinely curious about how the determination algorithm works and what interactions with Claude led it to suspect I was underage. Is it because English is not my first language? Or does it relate to my conditions (autism, ADHD)?”

After submitting two appeals, one in jest and one in earnest, Trummler12 received no response for two days. “I even let them scan my face, and although I was wearing sunglasses (due to light sensitivity), it should have been clear that I’m not a child. Eventually, after growing out my beard a bit, I passed the age verification (still wearing sunglasses).”

Trummler12 expressed concerns about privacy and security risks associated with age verification, stating, “After the Discord age verification data leak, I will never send my ID to any platform again. The age verification process is problematic on many levels: it has high privacy and security risks, is technically inaccurate, and introduces bias, creating a false sense of security.”

Growing Frustration with Customer Support

Eight months earlier, a user from Monotonea faced a similar issue with their enterprise account and sought help from customer support, only to be ignored:

“I convinced my company to purchase the Claude Team paid version because I believed this AI service could be a great learning tool for my colleagues. We created five team accounts, but shockingly, two colleagues were banned immediately after creating their accounts. This happened during a team onboarding session, which was very embarrassing and frustrating. I felt guilty towards my colleagues and the company since I pushed for this initiative.”

Monotonea emphasized that all email addresses used were official company domain emails, and the company is fully compliant with regulations. “The accounts were banned immediately after creation, which I find very strange and concerning. We usually access the internet through the company VPN, so this might have been misidentified as suspicious activity. Regardless, I am very disappointed with the lack of responsiveness and professionalism from customer support.”

The Rise of User Frustration

“They must have made some changes recently that significantly increased the frequency of automatic bans,” one developer noted. Social media comments and Reddit posts suggest the problem is getting worse, and developers say the worst part is the lack of any effective appeal channel or support. “The appeal process is a joke; it’s just a Google form that seems to have no human intervention.”

As dissatisfaction with Anthropic grows, a website called “Banned by Anthropic” has launched, aiming to promote manual reviews and fair appeal mechanisms. This platform collects and showcases user experiences of account bans while urging Anthropic to improve its banning and appeal processes.

The website emphasizes that the current appeal process relies too heavily on form submissions, lacking transparent explanations and timely feedback. Users often struggle to restore services after being banned.

The founders of the site argue that as Claude’s use among enterprises and developers increases, account bans are no longer just an individual issue but can directly impact team collaboration and business continuity. Their core demands include introducing manual review mechanisms, improving appeal response efficiency, and providing clearer explanations for bans.

Concerns Over Vendor Lock-In

The Claude ban wave has also revived concerns about vendor lock-in. This is not a new problem. In the cloud computing era, businesses repeatedly warned that once critical infrastructure, business processes, and historical data are tied to a single platform, any problem with that platform is magnified. In the era of large models, the problem persists and reaches even deeper into organizations.

AI tools are not just a “chat window”; they are becoming embedded in daily workflows, such as code development, internal knowledge bases, customer service systems, and automation processes. If an AI platform suddenly goes offline or bans accounts, the loss could be an entire set of operational capabilities.

Entrepreneur Ossy Nebolisa stated, “In the internet age, platform bans have become one of the most severe business risks. However, there is little public discussion about it.”

“Relying on a single vendor is a poor decision. I have designed my products with idempotent architecture at both the orchestrator and large model levels to avoid such dependencies. As a shareholder, if this CEO lacks even a basic contingency plan, I would replace him as soon as possible. Nowadays, many individuals without real business experience can become CEOs,” one user remarked.

Conclusion

Should we place our AI infrastructure in the hands of a single company? Molina noted that using multiple AI platforms internally has both advantages and disadvantages. The biggest advantage is business continuity when a service is interrupted, as in the current situation with Claude. Even so, switching to another platform such as Gemini means giving up existing conversation history and integrations, a loss that is not critical but takes time to adapt to.

The biggest disadvantage is increased operational complexity. Teams must familiarize themselves with each platform, which consumes time and resources. Moreover, integrating different AI platforms is not straightforward, making ongoing maintenance more cumbersome.

In practice, many companies end up “binding” themselves to a few stable, well-regarded vendors (such as Slack, Gmail, and Notion). What is unacceptable, however, is a service going offline without prior notice and without accessible customer support.

“The issue is that this company, like OpenAI, is essentially a ‘hype product.’ They thrive on demand created by hype and then impose a set of excessive and unrealistic restrictive ‘policies’ that are not based on reasonable judgment. When they start enforcing these policies, and you express dissatisfaction… it’s over,” Tyreese Learmond commented.

Clearly, Anthropic is entering enterprise workflows as an infrastructure company but has not demonstrated the responsibility that comes with such a role. This is not just Anthropic’s issue; it is a responsibility that every company aspiring to be an infrastructure provider must accept.

As one user questioned:

“How many more times do we have to watch this happen? Every new platform that the public once loved eventually reaches this point: people build projects to make a living on it, and then one day, everything suddenly disappears, permanently banned, with no appeal channels, no human intervention, and no explanation. All that remains is a Google form and silence.”
