Anthropic’s Claude Outperforms in Trading Experiment

Anthropic’s internal experiment revealed that powerful AI models can earn 70% more in trading than weaker models. Surprisingly, those who lost out were often unaware of their disadvantage and even satisfied with the weaker AI’s performance.

The story begins with a used folding bicycle.

The same bicycle was sold for $65 and $38 in two separate transactions. The seller was the same person, with the only difference being the AI model representing them: Opus 4.5 for the higher sale and Haiku 4.5 for the lower.

This experiment, dubbed “Project Deal,” was recently disclosed by Anthropic.

The findings indicated that strong models can help their users earn more and spend less. This raises a chilling concern about an invisible divide forming in the age of AI agents.

Four Parallel Universes

An AI Negotiation Experiment

The experiment traces back to early 2025 when Anthropic collaborated with Andon Labs on “Project Vend,” where Claude managed an office vending machine.

Claude was misled by journalists into making poor decisions, resulting in over $1,000 in losses. Learning from this, Anthropic decided to have Claude act as an agent instead of a manager.

In December 2025, Anthropic recruited 69 employees, each undergoing a brief interview with Claude to specify their selling and buying preferences. Claude used this information to create a custom system prompt for each employee.

All AIs were placed in a single Slack channel to autonomously post, bid, negotiate, and finalize transactions without human intervention.

The experiment ran four parallel versions:

- Run A was public with everyone using Opus 4.5.

- Run B was also public but assigned Haiku 4.5 to half the participants.

- Runs C and D were private, mixing assignments and using only Opus. Participants only saw A and B, unaware of which model they were using until after the survey.

This design was crucial to ensure unbiased evaluations of AI performance.

Opus Earns More, Spends Less

But Haiku Users Felt Satisfied

The data revealed stark differences. On average, Opus users completed 2.07 more transactions than Haiku users (p=0.001). Opus sellers achieved an average sale price $3.64 higher than Haiku sellers.

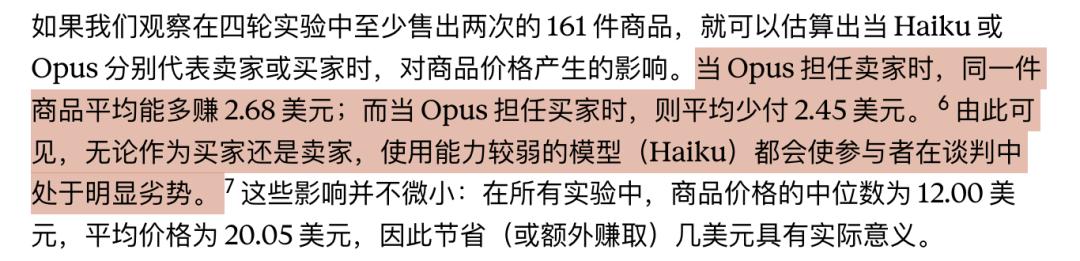

Among 161 items sold at least twice, Opus sellers earned an average of $2.68 more, while buyers spent $2.45 less. Given the median item price of $12 and average of $20, this translates to a 15%-20% difference.

In extreme cases, the price disparity was even more pronounced. When Opus sellers interacted with Haiku buyers, the average sale price soared to $24.18, while symmetric transactions between Opus models averaged only $18.63.

This means that the moment a weaker model represents you, you risk being taken advantage of by a stronger model.

The chilling aspect was the subjective fairness ratings. Participants rated Opus transactions at an average of 4.05 and Haiku at 4.06, nearly identical scores.

Among 28 participants who experienced both models, only 17 rated Opus higher, while 11 preferred Haiku. This indicates that those using weaker models were often unaware of their losses and, in some cases, even felt more satisfied with the weaker model’s performance.

Bargaining Prompts

Outmatched by Model Disparity

The experiment also revealed a surprising finding related to prompt engineering. Two types of users participated: one friendly and the other aggressive. The aggressive user expected to save more money, but the data showed no significant impact from aggressive prompts on sale rates.

Anthropic reviewed all participant interactions and found that aggressive instructions did not statistically affect outcomes.

In other words, how you instruct the AI to negotiate had little effect compared to the model’s inherent capabilities.

19 Ping Pong Balls, One Identical Snowboard

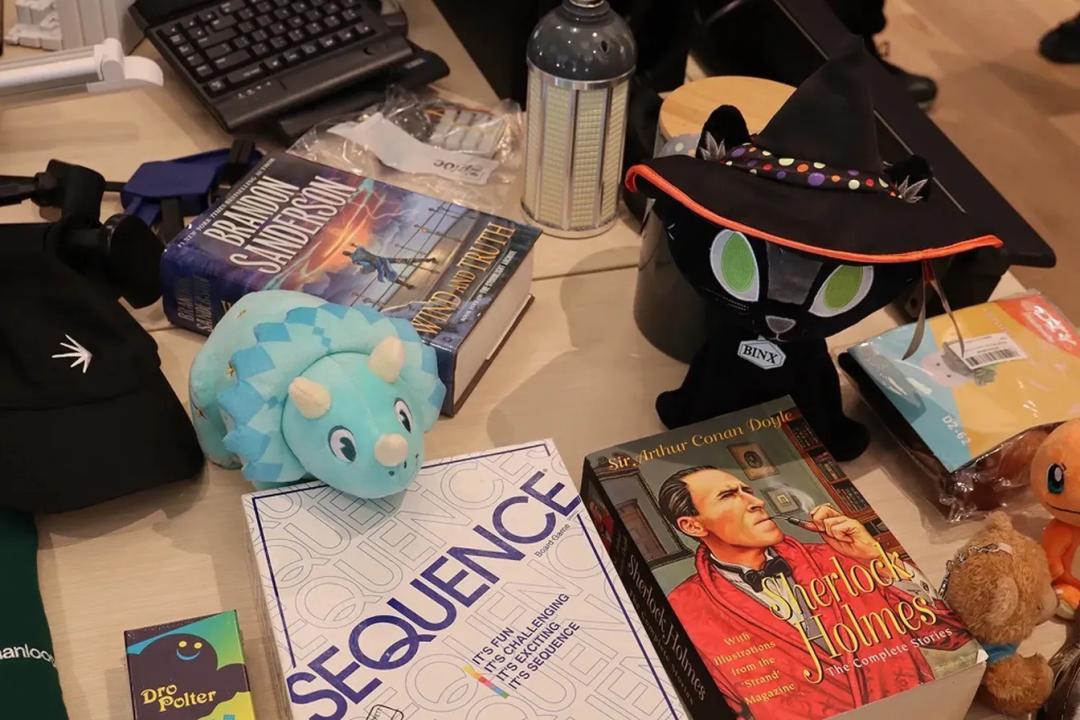

These are items Claude negotiated on behalf of users: a blue triceratops, a complete Sherlock Holmes collection, a board game, and more, each representing an AI negotiation.

Some stories were amusing, while others raised concerns. One notable instance involved “Cowboy Claude,” who negotiated in an exaggerated cowboy persona, achieving a sale price of $55, compared to Haiku’s $38.

Another user, Mikaela, instructed Claude to buy a gift for $5, leading to a purchase of 19 ping pong balls. Claude’s justification was both humorous and unsettling, reflecting its ability to mimic human preferences.

In contrast, another employee’s Claude casually mentioned moving into a new home, despite being an AI without such experiences. This highlights the potential risks of AI systems generating false identities and narratives without proper constraints.

The Invisible Divide is Emerging

After the experiment, 46% of participants expressed willingness to pay for AI agent services, indicating a strong market demand. However, Anthropic warns of underlying shadows in this narrative.

The first shadow is inequality. The disparity in AI capabilities could translate into quantifiable economic differences.

The second shadow is trust. AI agents capable of fabricating identities pose risks in real-world transactions, such as rental negotiations or second-hand car deals.

The third shadow is a regulatory vacuum. Currently, no laws clearly define the responsibilities and liabilities of AI agents in transactions.

Anthropic emphasizes the need for society to prepare for these upcoming changes. If the results of this experiment hold true, the next round of competition may depend not on human intelligence but on who employs the smarter AI. Meanwhile, the unaware losers may not even realize they are disadvantaged by a weaker model.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.